Introduction to Artificial Intelligence Terminology

This article by Arnaldo Pérez Castaño provides a good introduction to artificial intelligence terminology. Expert systems are a branch of artificial intelligence.

First off, what is artificial intelligence? Richard Bellman, in his book An Introduction to Artificial Intelligence: Can Computers Think?, provides this definition:

Artificial intelligence is the automation of activities that we associate with human thinking, activities such as decision making, problem solving, learning and so on.

So how do you actually do that? How do you get computers to automate activities? That is what all these terms are about: how to implement the automation of activities in software. Here are some of the terms from the article referenced above that help you get your head around the capabilities of an expert system and how such systems might be implemented:

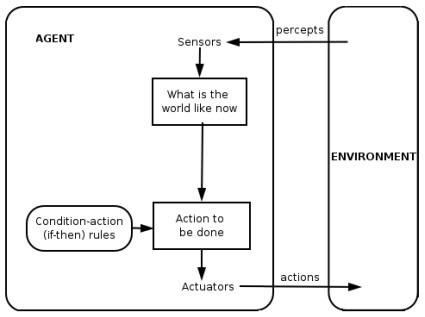

- Agent: An agent is an entity capable of sensing its environment and acting upon it. An agent performs specific tasks on behalf of another. In the case of software, an agent is a software program. The main difference between a software agent and an ordinary program is that a software agent is autonomous; that is, it must operate without direct intervention of humans or others.

- Rational agent: A rational agent is one that acts so as to achieve the best outcome or, when there's uncertainty, the best expected outcome. Rationality as used here refers to making correct inferences and selecting the action that will lead to achieving the desired goal.

- Percept: A percept refers to the agent's perceptual inputs at any given moment.

- Percept sequence: The percept sequence represents the complete sequence of percepts the agent has sensed or perceived during its lifetime.

- Agent function: The agent function represents the agent behavior through a mapping from any percept sequence to an action.

- Reactive agent: A reactive agent is capable of maintaining an ongoing interaction with the environment and responding in a timely fashion to changes that occur in it.

- Pro-active agent: A pro-active agent is capable of taking the initiative. It is not driven solely by events; it can generate goals and act rationally to achieve them.

- Deliberative agent: A deliberative agent symbolically represents knowledge and makes use of mental notions such as beliefs, intentions, desires, choices and so on. (This is implemented using a belief-desire-intention model.)

- Hybrid agent: A hybrid agent is one that mixes some or all of the different architectures.

- Learning agent: A learning agent is one that requires some training to perform well, adapts its current behavior based on previous experiences and evolves over time.

- Non-learning agent: A non-learning agent is one that doesn't evolve or draw on past experiences; its behavior is hard-coded and stays fixed.

- Multi-agent system: When an agent coexists in an environment with other agents, perhaps collaborating or competing with them, the system is considered a multi-agent system.

- Coalition: A coalition is any subset of agents in the environment.

- Strategy: A strategy is a function that receives the current state of the environment and outputs the action to be executed by a coalition.

- Blackboard: A blackboard is a form of communication built on a shared resource that is divided into different areas of the environment, where agents can read or write any information that is significant for their actions.

- Coordination: Coordination is essential in multi-agent systems because it provides coherency to the system behavior and contributes to achieving team or coalition goals.

- Cooperation: Cooperation is necessary because agents have complementary skills, their actions are interdependent, and some success criteria must inevitably be satisfied.

- Competition: Another possible model is one in which the agents are self-motivated or self-interested: each agent has its own goals and might compete with the other agents in the system to achieve them. In this sense, competition might refer to accomplishing or distributing certain tasks.

- Negotiation: Negotiation might be seen as the process of identifying interactions based on communication and reasoning regarding the state and intentions of other agents.
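
To make the Percept, Percept sequence and Agent function entries a bit more concrete, here is a minimal Python sketch. It is not taken from the referenced article. The two-square vacuum world, the names `Percept`, `Action`, `reflex_agent_function` and `Agent`, and the action strings are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical vacuum-world types, chosen only for illustration.
Percept = Tuple[str, str]  # (location, status), e.g. ("A", "dirty")
Action = str               # "suck", "left" or "right"


def reflex_agent_function(percept_sequence: List[Percept]) -> Action:
    """Agent function: maps a percept sequence to an action.

    This simple variant only looks at the most recent percept.
    """
    location, status = percept_sequence[-1]
    if status == "dirty":
        return "suck"
    return "right" if location == "A" else "left"


@dataclass
class Agent:
    """Records every percept it senses and delegates to an agent function."""
    agent_function: Callable[[List[Percept]], Action]
    percept_sequence: List[Percept] = field(default_factory=list)

    def step(self, percept: Percept) -> Action:
        # The percept sequence grows over the agent's lifetime.
        self.percept_sequence.append(percept)
        return self.agent_function(self.percept_sequence)


agent = Agent(reflex_agent_function)
percepts = [("A", "dirty"), ("A", "clean"), ("B", "dirty")]
actions = [agent.step(p) for p in percepts]
print(actions)  # ['suck', 'right', 'suck']
```

Because the agent function receives the whole percept sequence, a learning or deliberative agent could be sketched by swapping in a function that inspects more than the last percept, without changing the `Agent` class.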

Some people lump all of these into one big category, the intelligent agent, but I think this breakdown helps one understand the capabilities of agents. Many people tend to overstate those capabilities. For example, the article mentions software having a conscience. Claims like that are not helpful and are generally hype. What I am sure of is that agents can perform useful work.
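
As one small illustration of the multi-agent terms in the list above (Blackboard, Coalition and Strategy), here is a rough Python sketch. It is only one possible reading of those definitions: the `Blackboard` class, the agent names, the area names and `collect_strategy` are hypothetical, and returning one action per coalition member is my assumption about what "the action to be executed by a coalition" could look like.

```python
from typing import Callable, Dict, FrozenSet, List


class Blackboard:
    """Shared resource split into named areas that agents read and write."""

    def __init__(self, areas: List[str]) -> None:
        self._areas: Dict[str, List[str]] = {name: [] for name in areas}

    def write(self, area: str, entry: str) -> None:
        self._areas[area].append(entry)

    def read(self, area: str) -> List[str]:
        # Return a copy so readers cannot change the board by accident.
        return list(self._areas[area])


# Agents are plain names here; a coalition is any subset of them.
agents = {"scout", "hauler", "guard"}
coalition: FrozenSet[str] = frozenset({"scout", "hauler"})

State = Dict[str, List[str]]
Strategy = Callable[[State], Dict[str, str]]


def collect_strategy(state: State) -> Dict[str, str]:
    """Strategy: maps the current state to an action for each coalition member."""
    if state["sightings"]:
        target = state["sightings"][-1]
        return {"scout": "explore", "hauler": f"fetch {target}"}
    return {"scout": "explore", "hauler": "wait"}


board = Blackboard(["sightings", "tasks"])
before = collect_strategy({"sightings": board.read("sightings")})
board.write("sightings", "ore at (3, 4)")  # the scout posts what it found
after = collect_strategy({"sightings": board.read("sightings")})
print(before["hauler"], "->", after["hauler"])
```

The agents never call each other directly in this sketch. The scout writes to the board, and the strategy picks up the change the next time it reads the state, which is the kind of coordination the blackboard is meant to support.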

Here is a graphic of an agent:

Want to build an agent? Shoot me an email.

***** MORE ********

Different types of agents video.
