Distinguishing Between Good, Less Good, Bad, and Worse Ontology-like Things

In her book An Introduction to Ontology Engineering (PDF page 23), C. Maria Keet, PhD, discusses what makes an ontology good and what makes one not so good. She covers three categories of errors:

- Syntax errors: She compares a syntax error in an ontology to computer code that fails to compile. For example, an XBRL processor tells you that your XBRL taxonomy is not valid per the XBRL technical specification.

- Logic errors: She describes logical errors within an ontology-like thing, which cause the ontology to not work as expected. For example, you represented something in your XBRL taxonomy as a credit when it should have been a debit.

- Precision and coverage errors: Finally, Keet discusses precision and coverage as the basis for judging whether an ontology or ontology-like thing is good or bad, and provides a set of four graphics that drive this point home. Precision can be low or high; coverage can likewise be low or high. For example, if you neglect to represent a rule, something that should not be permissible becomes permissible. The fundamental accounting concept relations quality issues result from precision/coverage errors. (Others apparently call this "precision and recall", but I think that term is related to machine learning or pattern-based systems, and I am more focused on rules-based systems. In first-order logic, the terms used seem to be "sound, complete, and effective".)

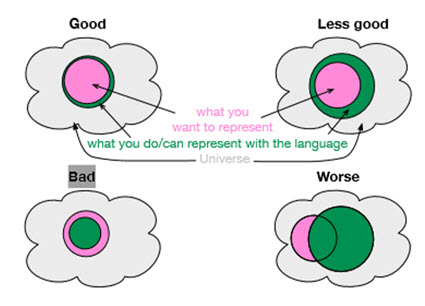
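The missing-rule example from the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the report values, and the idea of modeling rules as plain Python functions are all hypothetical and are not taken from XBRL or from Keet's book. The only domain fact used is the accounting equation, Assets = Liabilities + Equity.

```python
# Hypothetical illustration: names and figures are invented for this sketch.

def assets_equal_liabilities_plus_equity(facts):
    """Accounting equation: Assets = Liabilities + Equity."""
    return facts["Assets"] == facts["Liabilities"] + facts["Equity"]


def is_permissible(facts, rules):
    """A set of facts is permissible when every represented rule holds."""
    return all(rule(facts) for rule in rules)


# A report whose balance sheet does not balance (1000 != 600 + 300).
broken_report = {"Assets": 1000, "Liabilities": 600, "Equity": 300}

rules_with_gap = []  # the rule was never represented
rules_complete = [assets_equal_liabilities_plus_equity]

print(is_permissible(broken_report, rules_with_gap))  # True: the error slips through
print(is_permissible(broken_report, rules_complete))  # False: the rule catches it
```

With the rule left out, nothing is checked, so the broken report is accepted. Adding the rule is what makes the impermissible report detectable.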

You get a good ontology when both the precision and the coverage of the ontology are high. Precision is a measure of how precisely you do or can represent the information of a domain within an ontology-like thing, as compared with reality. Coverage is a measure of how well you do or can represent a domain of information within an ontology-like thing.

The graphic shown above helps you visualize the notions of precision and coverage. The "universe" (also called the universe of discourse or simply the domain) is shown in light gray. The pink indicates the set of what you represented in your ontology-like thing. The green indicates what could possibly be represented using the language you are using (i.e., determined by where that language sits in the ontology spectrum).

If you represent the things that you should represent (i.e., your coverage is good) and you do so such that the ontology-like thing accurately represents reality, then you get a good ontology-like thing. But if an ontology-like thing cannot do what it should be able to do, then it is a bad ontology-like thing. Things can go wrong when you have high precision but not enough coverage, or low precision with high coverage. Things can become really bad if neither your precision nor your coverage is what it should be given the goal you are trying to achieve.
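One way to make the four combinations concrete is to treat the ontology-like thing and the domain as sets and compute two ratios. The sketch below is an assumption on my part, borrowing the set-based definitions commonly used for precision and recall; it is not Keet's formal definition, and the function names, the sample terms, and the 0.8 threshold are all hypothetical.

```python
def precision_and_coverage(represented, intended):
    """Return (precision, coverage) for two sets of domain statements.

    represented: what the ontology-like thing actually admits
    intended:    what should be admitted, given the universe of discourse
    """
    overlap = represented & intended
    # Precision: share of what was represented that matches reality.
    precision = len(overlap) / len(represented) if represented else 0.0
    # Coverage: share of the intended domain that was represented.
    coverage = len(overlap) / len(intended) if intended else 0.0
    return precision, coverage


def describe(precision, coverage, threshold=0.8):
    """Name the quadrant; the threshold is an arbitrary illustrative cutoff."""
    p = "high" if precision >= threshold else "low"
    c = "high" if coverage >= threshold else "low"
    return f"{p} precision, {c} coverage"


intended = {"Assets", "Liabilities", "Equity", "Revenues", "Expenses"}
represented = {"Assets", "Liabilities", "Equity", "Unicorns"}

precision, coverage = precision_and_coverage(represented, intended)
print(precision, coverage)            # 0.75 0.6
print(describe(precision, coverage))  # low precision, low coverage
```

Here three of the four represented terms belong to the domain (precision 0.75), and three of the five intended terms were represented (coverage 0.6), so this toy example falls short on both measures.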

So precision and coverage matter when it comes to creating an ontology-like thing. Representing information in machine-understandable form is not something that just happens. It is a lot of work. Balancing the objective you are trying to achieve, the language that you use, what you put into your ontology-like thing, and how well your ontology-like thing represents your universe impacts how well your system will work.
